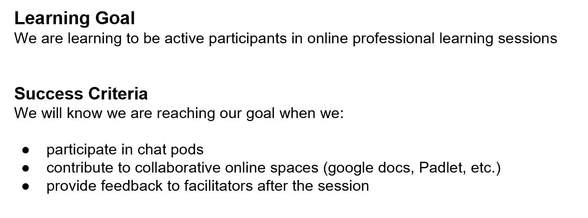

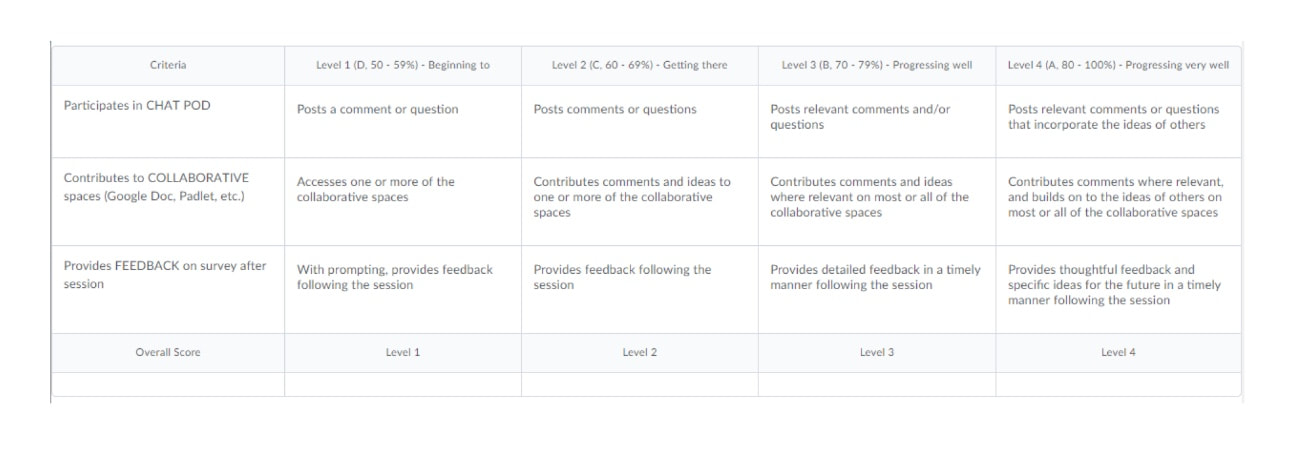

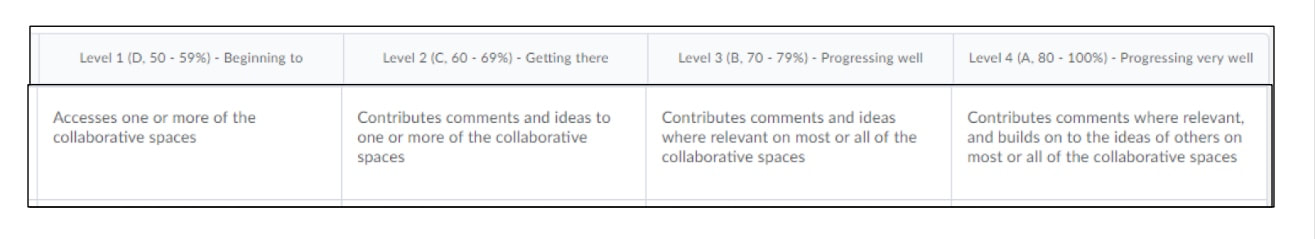

I’m excited, and have been preparing to practise what I preach in terms of using and sharing clear learning goals and success criteria, triangulating (and diversifying!) assessment, and offering variety and choice in learning activities. My first sessional at Holland College will, I hope, be as engaging and practical for my students as it is for me to prepare for it!

As I work to bring to life my syllabus for Communication and Technology in the Arts, the blended course I’ll be facilitating for first-year college students in the Fundamental Arts program this September, I am struck by an interesting realization: once again, I’ll be teaching the same “grade” as my own children!

This will actually mark the third time in my career that I’ll be working with students who are the same age as Alex and Simon. The first time I played this game was the year I taught a Grade 3 class: at the time, my own babies were in Grade 3, too (albeit at a different school), and I often compared mental notes to see how aligned my classroom was with theirs, developmentally. The edu-stars aligned again a few years later, when I moved from a Grade 7 & 8 Math and Science gig to Grade 6 Core… the same year Simon and Alex moved into Grade 6! And once again, I followed with interest what their respective teachers were up to and compared it to my own teaching and learning journey that year. (The boys even came to visit my students one day, as their school board and mine had different PD day schedules.)

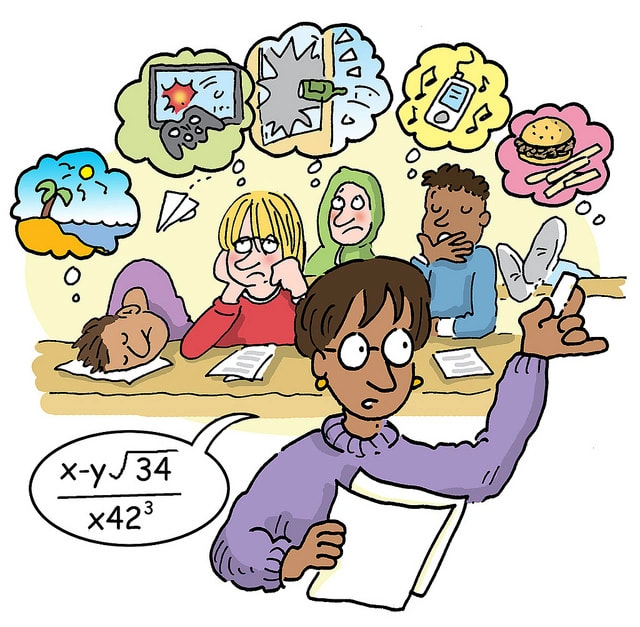

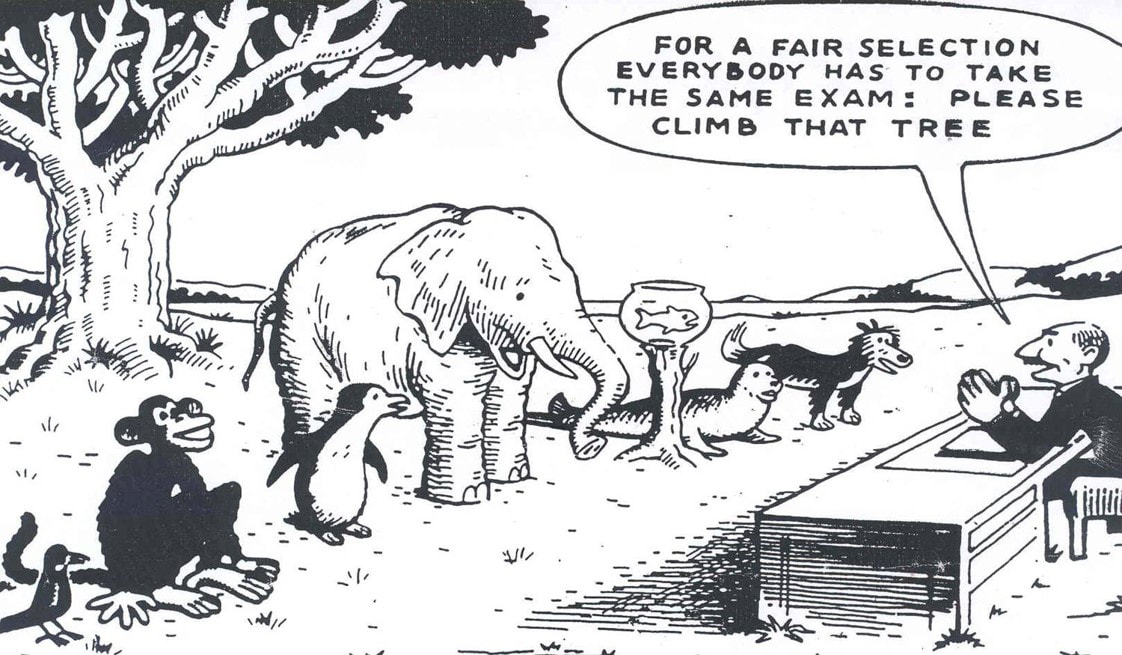

Having children (especially twins!) the same age as most of your students is quite helpful as a teacher. It’s even better if at least one of them has some sort of learning “issue,” as mine do. You get a daily sample of what 8 (or 12, or 18!) looks like: what’s “normal,” and what you can reasonably expect from your students. You also get reminded that your students are someone’s baby!! Just as I love and care for and think about my two all the time, someone else is loving and caring about the emotional welfare of each of the bodies in my classroom! This is a good reminder in moments of struggle, when a student doesn’t understand something, or needs extra organizational support with their schoolwork, or whatever. As a parent, I think, “How would I want my child’s teacher to engage with my child in a time like this?” And as that student’s educator, I can act accordingly.

It’s a reality that doesn’t change just because they’re in post-secondary now: if the students who show up in my class in September went through even half the logistical drama this spring and summer of signing up for everything and getting all their fees paid on time, then I respect them for the miracle of arriving at the right place at the right time on Day One!
